Retrying requests

What you’ll learn

In this guide you’ll learn:

- Why you need to implement retries

- Transient vs permanent error conditions

- Approaches to retrying

- Solution considerations when retrying

Why you need to retry

At the time of writing, there is no system on earth that is 100% available. Even enterprises like cloud providers and financial institutions plan for some degree of downtime, and that’s before we even consider unplanned outages.

With all this in mind, whether you are building into Marketplacer, or some other provider, you will need to implement a retry strategy for some or all of your API calls because: everything fails eventually.

Transient vs permanent errors

Before we further discuss why you should implement retries, it’s worth defining the 2 general classes of error that may cause you to retry (or not!):

- Transient error conditions are those scenarios where retrying the same request again at a later point in time would likely result in a successful outcome. Examples of this type of error would be:

- Temporary network unavailability

- Rate limits have been hit

- Some other reason related to platform availability

- Permanent error conditions are those scenarios where retrying the same request again at a later point in time would continue to result in an unsuccessful outcome. Examples of this type of error are:

- Malformed payload body

- Incorrect authentication credentials

- Some other data validation issue

From a retry perspective, transient and permanent error conditions are treated differently:

- With calls that fail for transient reasons, you just need to retry that same call at a future point in time, and more than likely it will succeed (although not always!)

- Calls that fail due to permanent errors are generally considered harder to retry, as you would usually need to change something about the original call in order for it to succeed.

We’ll discuss strategies for handling both these error classes later in this article.

Reference Architecture

While retrying API calls is a ubiquitous topic, from time to time we’ll want to reference a real world example, and what better example to use than integrations into Marketplacer.

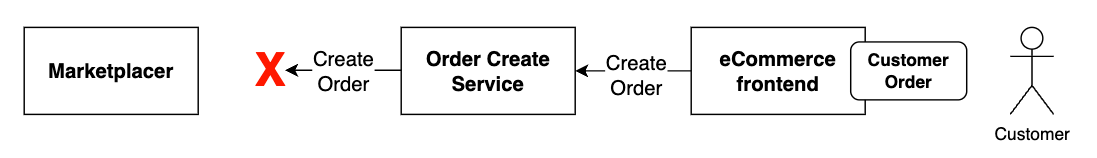

The architecture below depicts a simplified view of typical integrations into Marketplacer where we have:

- A seller making API calls to update their products

- An operator making API calls to create orders

Complete Integration View

This is clearly a simplified view of what we’d expect to see from both a seller and operator perspective. For an overview of what you typically expect from a complete integration perspective, please refer to the following playbooks:

Extending this diagram slightly to provide a (very simplified) view of the technical participants in this integration we have the following:

Here we can see:

- There is a whole range of network and other infrastructure between the services making the API calls, and the Marketplacer service serving those calls.

- The endpoint for these calls is an API provided by Marketplacer

Any and all of these components can behave in a way (planned or unplanned) that can cause an API call to fail. We’ll detail some of these scenarios as we move through the playbook to illustrate how retrying could be implemented.

Retrying in a distributed architecture

Even monolithic systems running on bare metal that make no external calls can experience scenarios where retrying may be appropriate, e.g.:

- A thread may deadlock

- The local DB may crash

However, for the remainder of this article we are going to be more focused on distributed solution architectures. For the purposes of this conversation we’ll define a distributed architecture as:

Any solution architecture where you have multiple independent systems (or services) connected over a network working together as a coordinated system.

Implementing retries in a distributed architecture (such as our reference example) is critical for the following reasons:

1. The network is unreliable

In distributed solutions, components communicate over the network - which we have to expect to be unreliable and unpredictable at points. Even healthy networks may:

- Drop packets

- Time out temporarily

- Return transient 5xx errors

- Hit rate limits

2. Retries absorb transient faults

We’ve already mentioned transient faults - those that are temporary in nature, for example:

- A service may be restarting

- A service may be unavailable before auto-scaling kicks in

- Service or network rate limits may have been hit

We must expect these to occur from time to time, so we must plan to handle them.

3. Services depend on each other

While we attempt to make services as autonomous as possible (this is one of the touted benefits of implementing microservices) ultimately those services do depend on each other for some reason.

In our example, the operator could perhaps still take customer orders via their eCommerce frontend, but ultimately they will eventually need to create those orders in Marketplacer so that sellers can fulfil them.

Implementing retries in such a scenario maintains the overall integrity of the system, but moreover it means that dependent systems (in our example, the operator’s order creation and other upstream services) will not fail.

Ensuring that components don’t fail when those that they depend upon are unavailable also helps avoid cascading service failures.

4. Graceful degradation

Implementing appropriate retry strategies (e.g. exponential backoff) helps avoid overwhelming a service that is already unable to service requests - i.e. you’re not making a bad situation worse.

Inappropriate retry strategies in this scenario (e.g. immediately retrying forever) will continue to apply unnecessary pressure on the impacted system, and could potentially lead to further (cascading) failures, or have “noisy neighbor” impacts when dealing with multi-tenant environments.

5. User experience

As already touched upon in point #3 (Services depend on each other), without retries you risk the overall integrity of the system from a business perspective, specifically data integrity. This can lead to things like customer dissatisfaction.

In our example this could be that from a customer perspective payment has been taken and an order has been created in the eCommerce system, meanwhile the order has not made its way to the seller for fulfillment.

Now that we have made a case for retries, let’s look at some of the strategies you can employ when implementing them.

Approaches to retrying

The following are a selection of retry methods that can be implemented (sometimes in combination) to fit your retry use-cases.

This is a generic discussion on retrying. Where there are Marketplacer-specific considerations, we’ll call those out.

1. Respect retry-after headers

Error class: Transient

A retry-after header will be issued when an endpoint cannot service a request. This can be for a number of reasons, but 2 common examples are:

- When rate limits have been hit

- When a service is otherwise unavailable (e.g. restarting or auto-scaling)

Marketplacer will usually issue retry-after headers with the following HTTP status codes for all of its API endpoints:

- 429 Too Many Requests

- 503 Service Unavailable

The header will contain either:

- A time in seconds after which it is safe to retry

- A date/time after which it is safe to retry

If no retry-after header is provided then the default Marketplacer retry-after value is 60s.

You should always honor the retry-after header.
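As a minimal sketch of handling the two header forms described above, the helper below converts a retry-after value into a wait time in milliseconds. The function name `parseRetryAfterMs` and its parameters are our own illustrative choices, and the 60s fallback simply mirrors the default mentioned above.

```javascript
// Convert a retry-after header value into a wait time in milliseconds.
// nowMs is injectable so the date form can be tested deterministically.
// defaultMs is an assumption that mirrors the 60s default described above.
function parseRetryAfterMs(headerValue, nowMs = Date.now(), defaultMs = 60000) {
  if (headerValue === null || headerValue === undefined) return defaultMs;
  const value = String(headerValue).trim();

  // Form 1: a whole number of seconds, e.g. "120"
  if (/^\d+$/.test(value)) return Number(value) * 1000;

  // Form 2: a date/time, e.g. "Wed, 21 Oct 2015 07:28:00 GMT"
  const retryAtMs = Date.parse(value);
  if (!Number.isNaN(retryAtMs)) return Math.max(0, retryAtMs - nowMs);

  // Unparseable value: fall back to the default
  return defaultMs;
}
```

A date that is already in the past yields a wait of 0 here; you may prefer to fall back to the default in that case instead.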

2. Retry with exponential backoff

Error class: Transient

Instead of retrying immediately or at fixed intervals, you can wait increasingly longer between retries. This prevents overwhelming the server or network, giving it more of a chance to recover.

Example pattern:

- Retry 1: wait 1s

- Retry 2: wait 2s

- Retry 3: wait 4s

- and so on up to your max limit of retries

If a retry-after header has been issued you should respect that first, or use it as a guide on which to base your backoff strategy.
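The example pattern above (1s, 2s, 4s, and so on) could be sketched as follows. The function name, the 1s base and the 60s cap are illustrative assumptions rather than recommended values.

```javascript
// Wait time before a given retry, doubling each time: 1s, 2s, 4s, ...
// retryNumber starts at 1. baseMs and maxMs are illustrative assumptions.
function backoffDelayMs(retryNumber, baseMs = 1000, maxMs = 60000) {
  const delay = baseMs * 2 ** (retryNumber - 1);
  // Cap the delay so later retries do not wait unreasonably long
  return Math.min(delay, maxMs);
}

console.log([1, 2, 3, 4].map(n => backoffDelayMs(n))); // [ 1000, 2000, 4000, 8000 ]
```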

Marketplacer webhook retries

When issuing webhooks, Marketplacer adopts the exponential backoff pattern when webhooks fail. You can read more about that strategy here.

3. Add jitter (random delay)

Error class: Transient

Jitter adds randomness to your backoff intervals to avoid too many clients retrying at the same time.

If a retry-after header has been issued you should respect that first, or use it as a base on which to add a randomized component.
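Below is a small sketch of two ways jitter might be applied. Both function names are hypothetical. The first randomizes a backoff delay; the second keeps a base value (such as a retry-after period) as the minimum wait and adds a random component on top. The `random` parameter exists only so the behaviour can be tested deterministically.

```javascript
// "Full" jitter: pick a random wait between 0 and the calculated backoff delay.
function withFullJitter(delayMs, random = Math.random) {
  return Math.floor(random() * delayMs);
}

// Additive jitter: never wait less than baseMs (e.g. a retry-after value),
// then add up to maxJitterMs of extra random delay.
function withAdditiveJitter(baseMs, maxJitterMs, random = Math.random) {
  return baseMs + Math.floor(random() * maxJitterMs);
}
```

Additive jitter is the safer choice when a retry-after value has been issued, as it never retries earlier than the server asked.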

4. Limit the number of retries

Error class: Transient / Permanent

You should not retry an unlimited number of times. There comes a point when you have to accept that retrying is not going to work, and the call should be marked as failed. At this point you should consider your Cleanup strategy.

In terms of setting the maximum retry limit, that will depend on the use case and the class of error. For example, permanent error types may have a lower maximum retry count than transient errors.
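One way to express a per-class limit is sketched below. The limits of 5 and 2 are placeholders we have chosen for illustration; the right values depend on your use case.

```javascript
// Hypothetical maximum attempts per error class (illustrative values only)
const MAX_ATTEMPTS = { transient: 5, permanent: 2 };

// Decide whether another attempt is allowed, given how many have been made.
// Unknown error classes are never retried.
function shouldRetry(errorClass, attemptsMade) {
  const limit = MAX_ATTEMPTS[errorClass] ?? 0;
  return attemptsMade < limit;
}
```

When `shouldRetry` returns `false`, the call would be marked as failed and handed to your cleanup strategy.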

5. Only retry transient errors

Error class: Transient

Don’t retry on all failures, or at least start by implementing retries on transient error classes before moving on to dealing with permanent errors (they are typically harder to manage).

Examples of transient errors include but are not limited to:

- HTTP 429 Too many requests (aka rate limiting)

- HTTP 5xx (server errors)

- Some other form of network timeout

Examples of permanent errors include but are not limited to:

- HTTP 4xx errors (not including HTTP 429)

- API validation errors

- This is especially important when dealing with GraphQL APIs - see below
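The HTTP status examples above could be classified with a helper along these lines. The function name and the returned labels are our own, and the sketch only covers status codes; network timeouts would surface as exceptions rather than statuses.

```javascript
// Classify an HTTP status code using the examples listed above.
function classifyStatus(status) {
  if (status >= 200 && status < 300) return 'success';
  // 429 (rate limiting) and 5xx (server errors) are treated as transient
  if (status === 429 || (status >= 500 && status < 600)) return 'transient';
  // All other 4xx responses are treated as permanent
  if (status >= 400 && status < 500) return 'permanent';
  return 'unknown';
}
```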

Errors in GraphQL

Unlike REST based APIs, GraphQL APIs do not typically issue HTTP 4xx response codes to denote error conditions. This is by design, and Marketplacer follows this design for its GraphQL based APIs.

Instead of issuing 4xx responses, a GraphQL API will return HTTP 200 along with an error object that contains field and message fields. When considering handling GraphQL errors you need to ensure you query this object as part of your payload response.

You can read more about errors in GraphQL by reading our GraphQL Best Practices Guide.
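Because an HTTP 200 does not guarantee success with GraphQL, a response check might look something like the sketch below. The top-level `errors` array comes from the GraphQL specification. The per-mutation `errors` list with `field` and `message` is an assumed payload shape for illustration, and `orderCreate` in the usage example is a hypothetical mutation name, so check the actual schema you are calling.

```javascript
// Collect any errors from a parsed GraphQL response body, even when HTTP 200 was returned.
function collectGraphQLErrors(body) {
  const found = [];

  // Top-level "errors" array, as defined by the GraphQL specification
  for (const err of body?.errors ?? []) {
    found.push({ field: null, message: err.message });
  }

  // Assumed mutation payload shape: data.<mutationName>.errors[] with field and message
  for (const payload of Object.values(body?.data ?? {})) {
    for (const err of payload?.errors ?? []) {
      found.push({ field: err.field ?? null, message: err.message });
    }
  }

  return found;
}

// Usage with a hypothetical mutation response
const errors = collectGraphQLErrors({
  data: { orderCreate: { errors: [{ field: 'externalId', message: 'has already been taken' }] } }
});
console.log(errors.length > 0 ? 'Treat as failed' : 'Treat as successful');
```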

6. Track retry context

Error class: Transient / Permanent

It goes without saying that when implementing retries you will need to track the following:

- Attempt count

- Last error

- Time of last attempt

- Retry-after

- Outcome

Language-specific frameworks aimed at providing out-of-the-box retry capabilities will often handle this for you.
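If you are tracking this yourself, the items listed above map naturally onto a small record. The sketch below is one possible shape; the property names and the `recordAttempt` helper are illustrative assumptions.

```javascript
// Create an empty retry context holding the items listed above.
function newRetryContext() {
  return {
    attemptCount: 0,
    lastError: null,
    lastAttemptAt: null,
    retryAfterMs: null,
    outcome: 'pending'
  };
}

// Return an updated copy of the context after an attempt.
// result is assumed to look like { ok, error, retryAfterMs }.
function recordAttempt(context, result, now = new Date()) {
  return {
    ...context,
    attemptCount: context.attemptCount + 1,
    lastError: result.ok ? null : result.error,
    lastAttemptAt: now.toISOString(),
    retryAfterMs: result.retryAfterMs ?? null,
    outcome: result.ok ? 'succeeded' : 'pending'
  };
}
```

Once the retry limit is reached without success, the outcome would be set to a final failed state as part of cleanup.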

7. Short-circuit / circuit breaker

Error class: Transient

Another approach taken to avoid overloading an endpoint under stress is to adopt the circuit breaker pattern. At a high level this works as follows:

- Open “the circuit” after failure, so that no further calls are made

- Wait for a cooldown period (this could be the retry-after value)

- Half-open the circuit to allow a test call

- Close the circuit if the test call succeeds, or re-open it if the call fails
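A deliberately small sketch of these steps follows. The class and method names are our own, the clock is injectable purely for testing, and the state that lets a test call through after the cooldown is labelled `half-open`, as it is commonly called. Production-grade breakers usually also count failures over a window before opening, which is omitted here.

```javascript
class CircuitBreaker {
  constructor(cooldownMs, now = Date.now) {
    this.cooldownMs = cooldownMs;
    this.now = now;
    this.state = 'closed'; // closed = calls flow normally
    this.openedAt = null;
  }

  // Should the next call be allowed through?
  canRequest() {
    if (this.state === 'open' && this.now() - this.openedAt >= this.cooldownMs) {
      // Cooldown elapsed: let a test call through.
      // Simplification: calls are allowed until a result is recorded.
      this.state = 'half-open';
    }
    return this.state !== 'open';
  }

  // A successful call closes the circuit
  recordSuccess() {
    this.state = 'closed';
    this.openedAt = null;
  }

  // A failed call opens (or re-opens) the circuit and restarts the cooldown
  recordFailure() {
    this.state = 'open';
    this.openedAt = this.now();
  }
}
```

You would call `canRequest()` before each API call, then `recordSuccess()` or `recordFailure()` depending on the result.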

8. Consider idempotency

Error class: Transient / Permanent

Idempotency refers to the property of an operation where making the same request multiple times has the same effect as making it once.

Retries can cause side effects if the API call isn’t idempotent. Always ensure the operation being retried can be safely repeated.

In our example we have 2 use-cases:

1. Seller updating products

Retrying the same update operation (and succeeding multiple times) would not result in the advert changing beyond the first successful update (aside from perhaps the updatedAt timestamp) - this is considered idempotent.

2. Operator creating orders

Retrying the same order create operation (and succeeding n times) would result in n orders - this is considered non idempotent.

The point here is that, when retrying, you need to be aware of the consequences of those operations occurring more than once.
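One possible client-side guard for a non-idempotent call such as order creation is to remember which requests have already succeeded, keyed by a value that uniquely identifies the order. In the sketch below, `externalId`, `createdOrders` and `createOrderOnce` are all hypothetical names, the create function is synchronous for brevity, and the in-memory map stands in for whatever durable store you would really use.

```javascript
// Hypothetical record of orders already created, keyed by external ID.
// In practice this would need to be durable, not in-memory.
const createdOrders = new Map();

// Only invoke createFn if this external ID has not already succeeded.
function createOrderOnce(order, createFn) {
  if (createdOrders.has(order.externalId)) {
    // A previous attempt succeeded: return its result instead of creating a duplicate
    return createdOrders.get(order.externalId);
  }
  const result = createFn(order);
  createdOrders.set(order.externalId, result);
  return result;
}
```

This only protects against duplicates your own service knows about. It does not cover a request that succeeded on the server but whose response never reached you.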

9. Queue and remediate

Error class: Permanent

The majority of the approaches to retrying so far have related to ways you can retry if you experience transient errors. Boiling it down it’s really just:

- Waiting for some time period (based on some algorithm or retry after value)

- Making the same call again

This approach will not work for permanent errors, as retrying the same request again is likely to have the same failure outcome.

Order creation example

For example, in our architecture if the operator issues an API call to create an order, and some form of API validation fails, e.g. the order has a duplicated external ID attached to it, no amount of waiting and retrying the same call is going to work.

In the above example you could:

- Just fail the order create request and move to clean up, or:

- Place the request into some kind of “triage queue” and enact some form of business logic on it depending on the validation error (in our example you may attach the correct external ID.)

- You would then retry the request when it had been remediated

The point here is that permanent errors need to be treated with more sophistication and complexity than transient errors. The reasons for failure are more nuanced, and so are the methods to remediate. Queuing these requests for further processing is 1 way to handle these types of retries.
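To make the triage queue idea a little more concrete, here is a very small in-memory sketch. The names `triageQueue`, `sendToTriage` and `remediate` are hypothetical, as are the status labels, and a real implementation would use a durable queue rather than an array.

```javascript
// Hypothetical triage queue for requests that failed with permanent errors
const triageQueue = [];

// Park a failed request along with the reason it failed
function sendToTriage(request, validationError) {
  const entry = { request, validationError, status: 'needs-remediation' };
  triageQueue.push(entry);
  return entry;
}

// Apply a fix to the parked request and mark it as ready to be retried
function remediate(entry, fix) {
  return { ...entry, request: fix(entry.request), status: 'ready-to-retry' };
}

// Usage: correct a duplicated external ID before retrying
const parked = sendToTriage({ externalId: 'EXT-1' }, 'externalId has already been taken');
const fixed = remediate(parked, req => ({ ...req, externalId: 'EXT-1-B' }));
console.log(fixed.status); // ready-to-retry
```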

10. Cleanup

Error class: Transient / Permanent

As the old saying goes:

“If at first you don’t succeed: try, try again. Then give up. There’s no point in making a fool of yourself.”

This is true of retrying API calls, indeed as discussed, it is actually part of a responsible approach to retrying.

So accepting that even after retrying, a call may ultimately fail, we need to compose an approach to clean up. Some things to consider here may be:

- Finalizing the status of the call as “failed”

- Generating some kind of exception report for manual intervention

- Notification or alerts

- Attempt rolling back partially completed steps

- Queuing somewhere for further retries

The last point is essentially “retrying the retries”, and should be done by exception. For example, you may expect most transient errors to be successfully catered for in your standard retry approach. You may however experience exceptional circumstances (disaster type scenarios) where the transient outage is much longer than you’d typically experience, and therefore the standard retry approach fails.

In these exceptional circumstances you may wish to enact the retry process at a later point in time.

Solution design considerations

In this last section we look at some high-level solution design patterns you could adopt when designing your retry approach, specifically we’ll focus on how retries could be stored. We’ll keep this technology-agnostic, and focus more on general concepts.

1. In-memory

In this approach any retries that need to occur would be managed entirely in-memory.

✅ Pros:

- Simple to implement

- Fast retry (no I/O)

- Used for short-lived or idempotent tasks

❌ Cons:

- Lost on crash or restart (so not useful when attempting to retry failed orders for example!)

- Not shared across service instances

- Not suited to long or deferred retries (e.g. if you were using an exponential backoff strategy)

2. Persistent cache

In this approach we store retries in a persistent cache that is local to the service to help us address some of the cons with the In Memory approach.

✅ Pros:

- Survives restarts

- Relatively simple

- No network overhead

❌ Cons:

- Not shared across service instances

- Limited scaling

3. Message Queue / bus

In this approach we consider the use of a message bus as the platform to manage our retry requests

✅ Pros:

- Decouples retry producer from the re-trier (this may be subjectively a pro!)

- Can scale

- Built in durability, ordering, and retry mechanics

- Suitable for background or async retries (i.e. permanent-type faults)

❌ Cons:

- Increased complexity (and cost)

- May introduce latency (this may not be an issue for some retry approaches)

- Needs retry logic consumers (again increased complexity)

4. Dedicated retry service

There are a number of technology-specific retry frameworks available that may suit your needs. In terms of storage options many of these support both in-memory and data persistence options. Examples include: Hangfire (.NET), Quartz (Java) and Celery (Python & other language variants)

✅ Pros:

- Implements common retry algorithms for you

- Usually includes monitoring, dashboards etc

❌ Cons:

- Adds 3rd party dependencies to your code (tighter coupling to a framework / tool)

Summary

The options we have covered are summarized below:

| Retry need | Solution option |
|---|---|
| Low-stakes, short-lived | 1. In-memory |
| Must survive a restart | 2. Persistent cache |
| Shared, scalable, async | 3. Message queue |
| Complex retry control | 4. Dedicated framework |

Worked Example

The following examples, in both C# and JavaScript (Node.js), demonstrate some of the concepts covered in this article, specifically:

- Using the retry-after header as a guide on when to retry next

- Applying a default retry period when a retry-after header has not been supplied

- Tracking the retry context

Disclaimer

The provided code examples have been provided for illustrative purposes only. For further information on the terms of use, please refer to the License.

Code

Note: if you have not done so already, you’ll need to install either the .NET SDK or Node.js to work with the examples.

C#

```csharp
using System.Net;

// Local test endpoints; swap the commented line to exercise the rate-limit scenario
string url = "http://localhost:5117/serviceavailability";
//string url = "http://localhost:5117/ratelimit";

int maxRetries = 5;
int attempt = 0;
int defaultWaitTime = 60; // seconds; used when no valid Retry-After header is returned

using HttpClient client = new();

while (attempt < maxRetries)
{
    attempt++;
    Console.WriteLine($"Attempt {attempt}...");

    // A request message cannot be re-sent, so build a new one for every attempt
    HttpRequestMessage request = new(HttpMethod.Post, url)
    {
        Content = new StringContent("{\"example\":\"data\"}", System.Text.Encoding.UTF8, "application/json")
    };

    HttpResponseMessage response = await client.SendAsync(request);

    if (response.IsSuccessStatusCode)
    {
        Console.WriteLine("Request successful!");
        break;
    }

    // Only 429 and 503 are treated as retryable in this example
    if (response.StatusCode == HttpStatusCode.TooManyRequests || response.StatusCode == HttpStatusCode.ServiceUnavailable)
    {
        if (response.Headers.TryGetValues("Retry-After", out var values))
        {
            var retryAfterValue = values.FirstOrDefault();

            // Form 1: Retry-After as a number of seconds
            if (int.TryParse(retryAfterValue, out int seconds))
            {
                Console.WriteLine($"Retry-After header found. Waiting {seconds} seconds...");
                await Task.Delay(TimeSpan.FromSeconds(seconds));
                continue;
            }

            // Form 2: Retry-After as a date/time
            if (DateTimeOffset.TryParse(retryAfterValue, out var retryDate))
            {
                var waitTime = retryDate - DateTimeOffset.UtcNow;
                if (waitTime > TimeSpan.Zero)
                {
                    Console.WriteLine($"Retry-After until {retryDate}. Waiting {waitTime.TotalSeconds:F1} seconds...");
                    await Task.Delay(waitTime);
                    continue;
                }
            }
        }

        // No Retry-After, or parsing failed: use the default wait
        Console.WriteLine($"No valid Retry-After found. Waiting default {defaultWaitTime} seconds...");
        await Task.Delay(TimeSpan.FromSeconds(defaultWaitTime));
    }
    else
    {
        // Any other failure is treated as permanent
        Console.WriteLine($"Request failed with status code {response.StatusCode}. Not retrying.");
        break;
    }
}

Console.WriteLine("Finished.");
```

JavaScript (Node.js)

```javascript
// Requires Node.js 18+ for the built-in fetch API
//const url = 'http://localhost:5117/ratelimit'; // Replace with your endpoint
const url = 'http://localhost:5117/serviceAvailability'; // Replace with your endpoint
const maxRetries = 5;
const defaultWaitTime = 60000; // milliseconds

const delay = ms => new Promise(resolve => setTimeout(resolve, ms));

async function postWithRetry() {
  let attempt = 0;

  while (attempt < maxRetries) {
    attempt++;
    console.log(`Attempt ${attempt}...`);

    try {
      const response = await fetch(url, {
        method: 'POST',
        headers: {
          'Content-Type': 'application/json'
        },
        body: JSON.stringify({ example: 'data' })
      });

      if (response.ok) {
        console.log('Request successful:', response.status);
        break;
      }

      // Only 429 and 503 are treated as retryable in this example
      if (response.status === 429 || response.status === 503) {
        const retryAfter = response.headers.get('retry-after');

        if (retryAfter) {
          // Fall back to the default wait if the header cannot be parsed
          let waitTime = defaultWaitTime;

          if (/^\d+$/.test(retryAfter.trim())) {
            // Form 1: retry-after as a number of seconds
            waitTime = parseInt(retryAfter, 10) * 1000;
          } else {
            // Form 2: retry-after as a date/time
            const retryDate = new Date(retryAfter);
            const now = new Date();
            const diff = retryDate - now;
            if (diff > 0) waitTime = diff;
          }

          console.log(`Received Retry-After: waiting ${waitTime / 1000} seconds before retrying...`);
          await delay(waitTime);
          continue;
        }

        console.log(`No Retry-After header. Waiting default ${defaultWaitTime / 1000} seconds...`);
        await delay(defaultWaitTime);
        continue;
      } else {
        // Any other failure is treated as permanent
        console.error(`Request failed with status ${response.status}. Not retrying.`);
        break;
      }
    } catch (error) {
      // This simple example stops on network errors; as discussed above,
      // these are often transient and could be retried instead
      console.error('Network or other error:', error.message);
      break;
    }
  }

  console.log('Finished.');
}

postWithRetry();
```

License

MIT License

Copyright (c) 2024 Marketplacer Pty Ltd

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.